Do you all think that will happen in the near future?

Böge: I think the standardization we saw in machine vision is of course also happening in automation, with OPC UA or time-sensitive networking (TSN). Many of these standards are also highly interesting for vision. Even though our machine vision cameras do not support Profinet, EtherCAT or other such standards directly, many of them are connected to a vision system that is itself integrated into such a network. So looking beyond your own domain is very important.

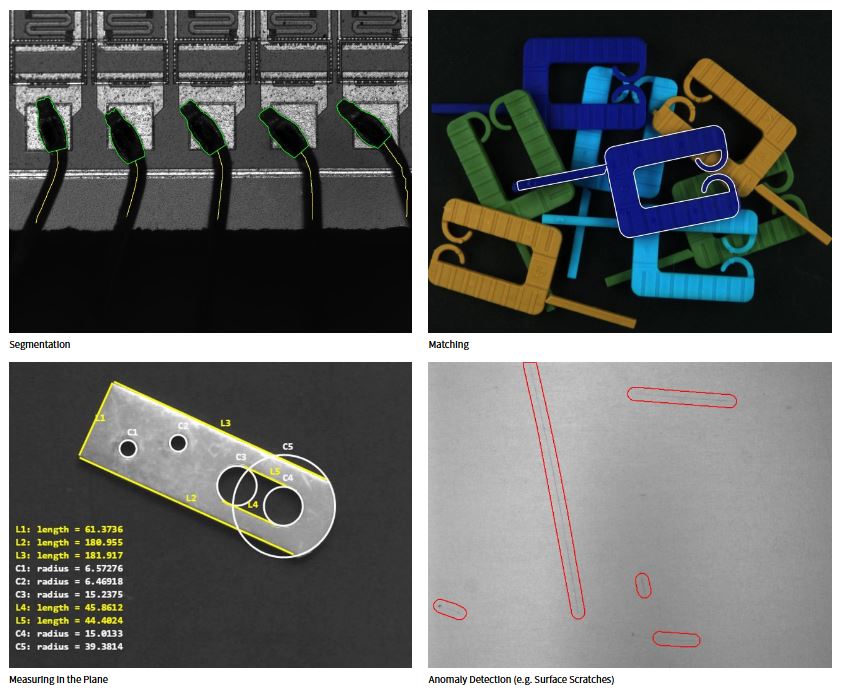

Longval: What I like about automation is that it is well contained, so it is possible to introduce new technologies. In other vision applications that is a lot harder because more factors are outside of your control, for example in non-traditional applications like traffic. So cameras in automation are where you see innovations like AI processing or non-visible light applications first.

What role will OPC UA play?

Wiesinger: For most PC-based camera manufacturers it is already part of their development, and it might become important for more intelligent cameras in the future. But of course we will not get rid of Profinet, Powerlink or EtherCAT yet.

Waldl: As cameras become more intelligent, OPC UA can of course smooth the road from camera to smart camera. And with TSN for high-speed synchronization becoming a topic, it is very important for us.

Böge: As I explained before, if your standard machine vision camera is connected to a PC, it seems like this is not in your back yard, so to speak. But I’m still convinced you have to stay very close to these kinds of trends and technologies and understand them, because they might bring those requirements to you. For example, years ago we integrated the Precision Time Protocol into our GigE cameras, which is similar to TSN and other high-speed synchronization protocols. Even if you probably won’t integrate OPC UA as a protocol into a standard machine vision camera, these underlying requirements have to be looked at very carefully.

What impulses will come from the embedded market for machine vision? What trends do you see?

Böge: It starts with the definition of "embedded", which is very fuzzy at some point. But the shrinking size and higher performance we see on the processing side are definitely also visible in cameras. Of course, that does not mean you need a board-level camera for every embedded processing unit.

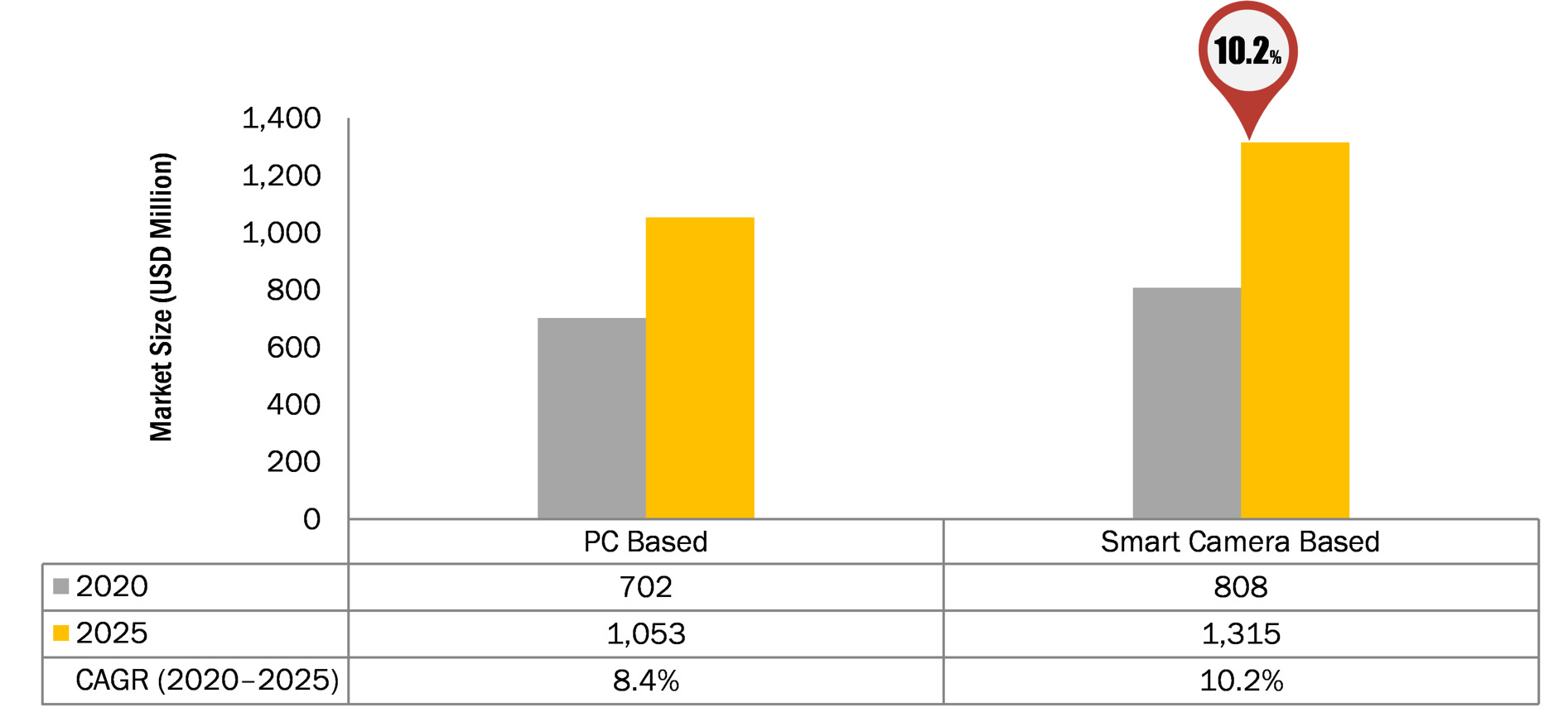

Wiesinger: I think in the past deep learning and AI hyped up the embedded market. There were a lot of users from the software side with relatively little knowledge of hardware or camera technology. So the big challenge in this market is to provide more standardization to make it easier for the customers to use the hardware with the software.

Waldl: More power, more flexibility and lower cost go naturally with a robust integrated industrial solution. A low-power but powerful integrated inference controller was unthinkable a few years ago but today there are many suppliers in this field. That’s why inference controllers will be a standard function of any industrial camera in the near future.

Longval: I think we are going to see more technologies coming from the embedded market into our space because we are good at adapting them. GigE, for example, came from the consumer IT space and we adapted it to our domain. For us it is a good way to bring in new technologies from high-volume markets, such as systems-on-chip.

What role do software and programming tools play in the near future?

Longval: In the old days a camera used to be a sensor, a logic board and a back camera interface of some sort, but it isn’t like that anymore. There is more software in play already, so it’s more of a solution than a camera. And we are going to see more of that, because customers want the ability either to work with us closely or to take what we have and do their own things regarding modules and applications. So making the software and tools available will be very important.

Wiesinger: You always have to be on top of these new software tools and different operating systems as a camera manufacturer. You also have to be fast to integrate them and make it as easy as possible for your customers who are used to applying these new tools.

Böge: I agree completely. We can already see this fragmentation, or high variety, on the software side, so you have to integrate programming languages and frameworks like Python or ROS into your SDK and make that a standard. We have been doing it with our Pylon SDK for years now and see that as a key benefit for our customers. This will be even more important in the future, with software running on the camera and on embedded systems in general. You can’t just give your SDK to your customers and let them try to connect it to third-party libraries on their own. At the very least you have to test all these possible combinations and ensure that, for customers, it feels like it comes from a single source.

Waldl: I would just add that many of the newer sensors have features that are often not provided by camera manufacturers. The tools should also provide access to these options.