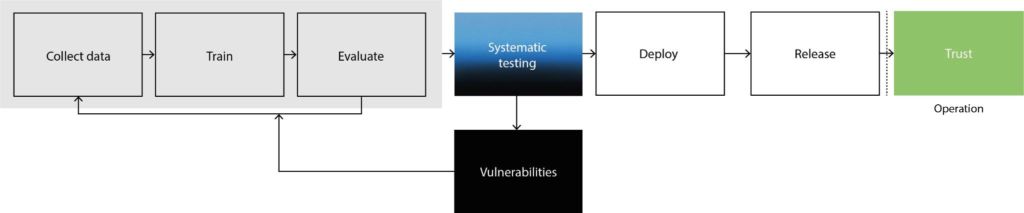

At the moment, we are observing a paradigm shift in the machine vision space: machine learning (ML), or its more advanced form, deep learning, is being used to build a new, second generation of AI-driven machine vision systems that promise powerful applications. But not so fast. When we created the first generation of machine vision, we relied on traditional software code to express behaviour. The complexity of that code and our testing practices grew hand in hand. We wrote a function and tested it. We created a class and tested it. We defined an API endpoint and tested it. Through a well-defined process, when we shipped code, we could be confident that it worked as expected. We followed what is known as a test-to-ship strategy. Unfortunately, that well-defined process no longer works for the new generation of machine vision with ML as its foundation.

When developing ML-driven machine vision systems, we now start with gigantic models and datasets to learn statistical dependencies at scale. We no longer express behaviour explicitly through software code, and instead of growing over time, the complexity is there from day one. As a result, creating complete quantitative testing and release processes is extremely time-consuming and requires specialized knowledge. So most teams end up relying on selective, manual testing until their ML systems work “well enough”, and then those systems break in the real world. This ship-to-test strategy means that we currently test ML after it has been shipped to customers. Current ML development practices therefore risk ruining customer experiences, damaging reputations, and putting people’s safety at risk. Above all, they place a massive burden on innovation.

Where do we go from here?

Given the complexity of ML, selective and manual testing doesn’t cut it. Companies that want to build the next generation of machine vision applications must adopt a test-to-ship mindset to understand the capabilities and limitations of their systems before deployment. ML systems require a new type of data-driven quality infrastructure to put transparency at the core of all development stages. Development teams must understand the performance of their solutions holistically. With infrastructure that makes ML transparent, we can measure system reliability and robustness, surface biases in models and data, and create a basis for communication with non-technical stakeholders and customers.
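To make the test-to-ship idea concrete, here is a minimal sketch of a release gate that measures a model on a labelled evaluation set before deployment, both overall and per data slice. Everything in it is an illustrative assumption rather than a prescribed process: the names `release_gate`, `GateReport`, `toy_predict` and `TOY_SAMPLES`, the slice labels, and the thresholds are all hypothetical, and accuracy stands in for whatever metrics a team actually cares about.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Tuple

# Hypothetical sample layout: (features, expected label, slice name).
Sample = Tuple[Sequence[float], int, str]


@dataclass
class GateReport:
    overall_accuracy: float
    slice_accuracy: Dict[str, float]
    passed: bool


def release_gate(
    predict: Callable[[Sequence[float]], int],
    samples: List[Sample],
    min_overall: float = 0.9,  # assumed threshold, tune per application
    min_slice: float = 0.8,    # assumed threshold, tune per application
) -> GateReport:
    """Evaluate a model overall and per slice, then decide whether it may ship."""
    if not samples:
        raise ValueError("evaluation set is empty")

    hits: Dict[str, int] = defaultdict(int)
    totals: Dict[str, int] = defaultdict(int)
    for features, label, slice_name in samples:
        totals[slice_name] += 1
        if predict(features) == label:
            hits[slice_name] += 1

    slice_accuracy = {name: hits[name] / totals[name] for name in totals}
    overall = sum(hits.values()) / sum(totals.values())

    # The gate fails if the model is weak overall OR weak on any single slice,
    # so a good average cannot hide a poorly served subgroup of the data.
    passed = overall >= min_overall and all(
        acc >= min_slice for acc in slice_accuracy.values()
    )
    return GateReport(overall, slice_accuracy, passed)


def toy_predict(features: Sequence[float]) -> int:
    """Stand-in for a real vision model: thresholds the first feature."""
    return 1 if features[0] > 0.5 else 0


# Tiny made-up evaluation set with two slices.
TOY_SAMPLES: List[Sample] = [
    ([0.9], 1, "day"),
    ([0.1], 0, "day"),
    ([0.8], 1, "night"),
    ([0.6], 0, "night"),
]

report = release_gate(toy_predict, TOY_SAMPLES, min_overall=0.7, min_slice=0.8)
print(report)
```

In this toy run the overall accuracy clears the assumed bar, but the "night" slice does not, so the gate blocks the release. That is the kind of per-slice transparency a quality infrastructure would need to provide at far larger scale.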

Companies now face the good old build-or-buy decision. Should they invest in building and maintaining their own quality infrastructure? That requires resources and specialized knowledge. The alternative, turning to existing tools, brings us to the rapidly maturing MLOps field. A swath of companies has sprung up over the last five years to bring best practices from DevOps to the world of machine learning, including Lakera, Iterative, ZenML, V7 and Lightly, to name but a few. With these MLOps solutions available, machine vision companies don’t have to shy away from ML. On the contrary, they can embrace it. A smart selection of MLOps tools allows even smaller teams to innovate safely.

Summary

Companies that succeed at innovating with ML and machine vision will lead the next era of industrial applications. These innovations will be built on reliability, trust and transparency. The best companies will not only create completely new types of products, but will also be resilient to upcoming regulatory changes such as the EU AI Act. So knowing all of this, ask yourself: How are you going to build the future with AI?

www.lakera.ai