Today’s large language models (LLMs), such as GPT-4 and Llama, have dramatically reshaped how people interact with technology, enhancing productivity, creativity, and accessibility. Yet the next wave of LLMs promises even more profound implications. Future LLMs are expected to learn continuously from interactions, adapting their responses to become deeply contextual and personal. These models won’t just respond to inputs; they will anticipate needs and integrate seamlessly into our daily lives, blurring the line between user and technology.

Vision LLMs: Bridging Perception & Understanding

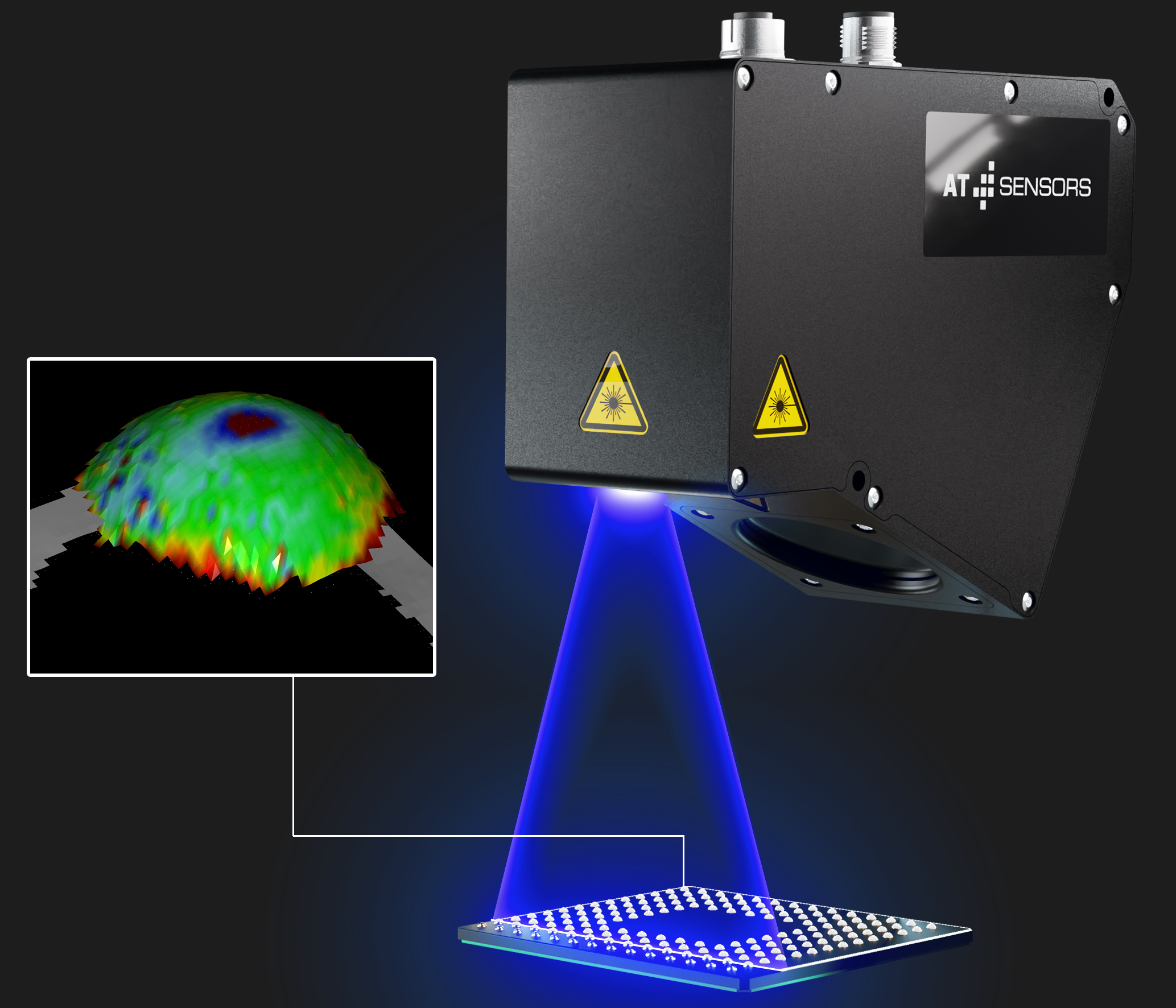

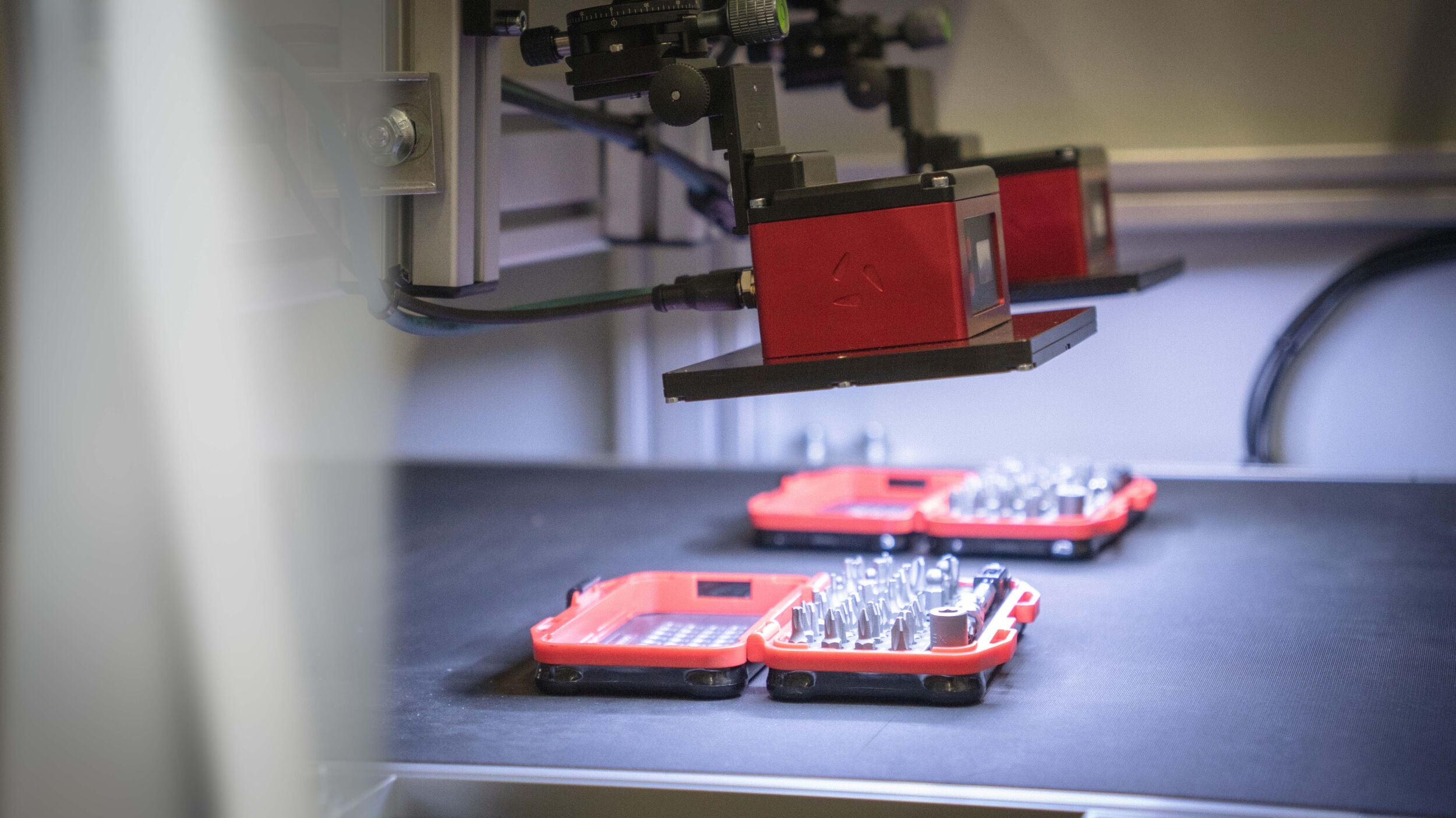

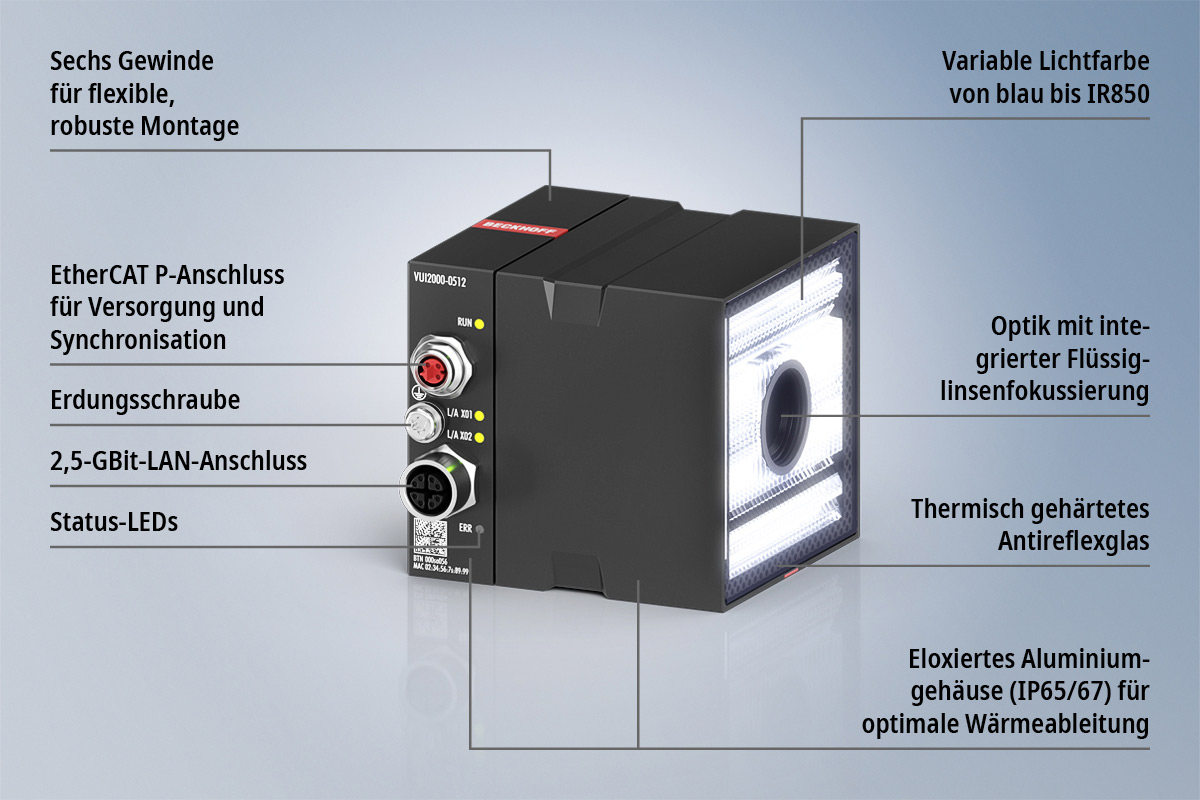

Vision-enabled LLMs like GPT-4V represent the convergence of visual and linguistic cognition, taking AI beyond simple perception towards genuine understanding. Future vision LLMs will interpret complex visual scenes and reason about them, transforming applications in critical fields such as healthcare, automotive safety, and smart urban infrastructure. Instead of simply describing what they see, these systems will understand context, predict outcomes, and recommend actions, enhancing human decision-making and creativity.

Multi-Modality: Integrating Human-Like Cognition

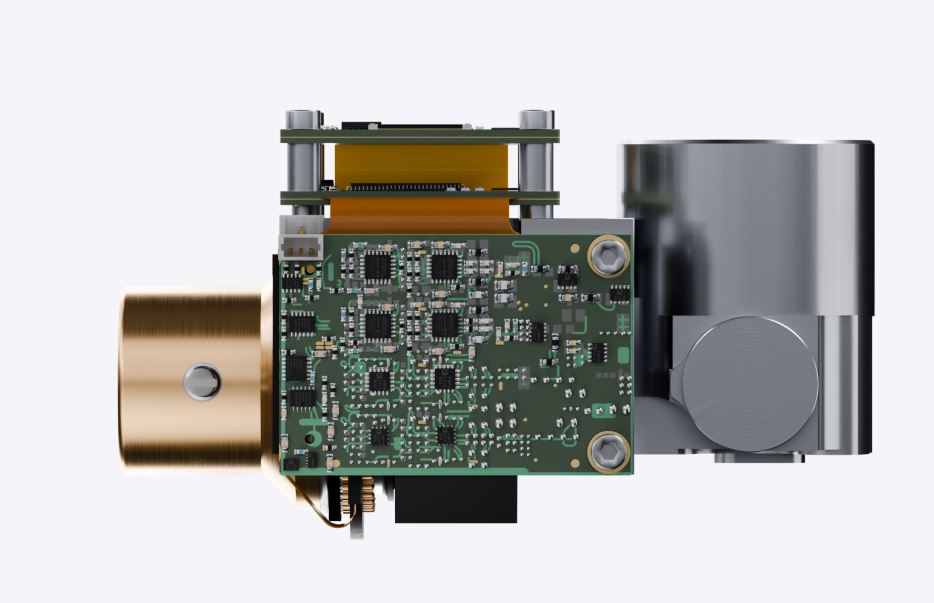

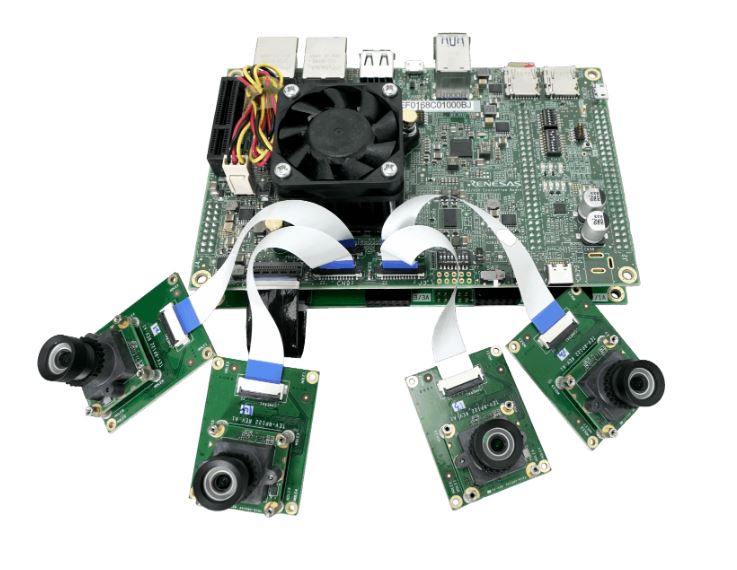

Multi-modality in AI refers to the integration of various types of data (text, images, audio, and sensor inputs) into a unified cognitive framework. This capability mirrors human intelligence, enabling AI systems to process diverse information simultaneously and coherently. Future multi-modal AI systems will blend these data streams with little friction, producing sophisticated understanding and reasoning across different sensory inputs. The implications are far-reaching, ranging from advanced robotics and personalized medical care to innovative forms of media and communication.
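To make the idea of a "unified framework" a little more concrete, here is a minimal sketch of one common pattern, late fusion: each modality is mapped into a shared vector space and the results are combined. Everything in it is an illustrative assumption rather than a description of any particular model. The names `SHARED_DIM`, `make_projection`, and `fuse` are hypothetical, the feature vectors are toy values, and the random projection matrices stand in for trained encoders.

```python
import math
import random

SHARED_DIM = 4  # hypothetical size of the shared embedding space


def make_projection(in_dim, out_dim, seed):
    """Build a fixed random matrix (a stand-in for a trained encoder head)."""
    rng = random.Random(seed)
    return [[rng.uniform(-1.0, 1.0) for _ in range(in_dim)] for _ in range(out_dim)]


def project(matrix, features):
    """Map a modality-specific feature vector into the shared space."""
    return [sum(w * x for w, x in zip(row, features)) for row in matrix]


def l2_normalize(vec):
    """Scale a vector to unit length so no single modality dominates the average."""
    norm = math.sqrt(sum(v * v for v in vec))
    return [v / norm for v in vec] if norm > 0 else vec


def fuse(inputs, projections):
    """Late fusion: project each modality, normalize, then average element-wise."""
    projected = [
        l2_normalize(project(projections[name], feats))
        for name, feats in inputs.items()
    ]
    return [sum(column) / len(projected) for column in zip(*projected)]


# Toy feature vectors of different lengths, one per modality (made-up values).
inputs = {
    "text": [0.2, 0.7, 0.1],
    "image": [0.9, 0.4, 0.3, 0.8, 0.5],
    "audio": [0.6, 0.1],
}
projections = {
    name: make_projection(len(feats), SHARED_DIM, seed=i)
    for i, (name, feats) in enumerate(inputs.items())
}

fused = fuse(inputs, projections)
print(len(fused))  # one vector of length SHARED_DIM, whatever the input sizes
```

Averaging is only the simplest way to combine modalities. Production systems typically learn the projections jointly and often use attention across modalities instead, but the shape of the problem is the same: inputs of different sizes and types end up in one representation that downstream reasoning can use.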

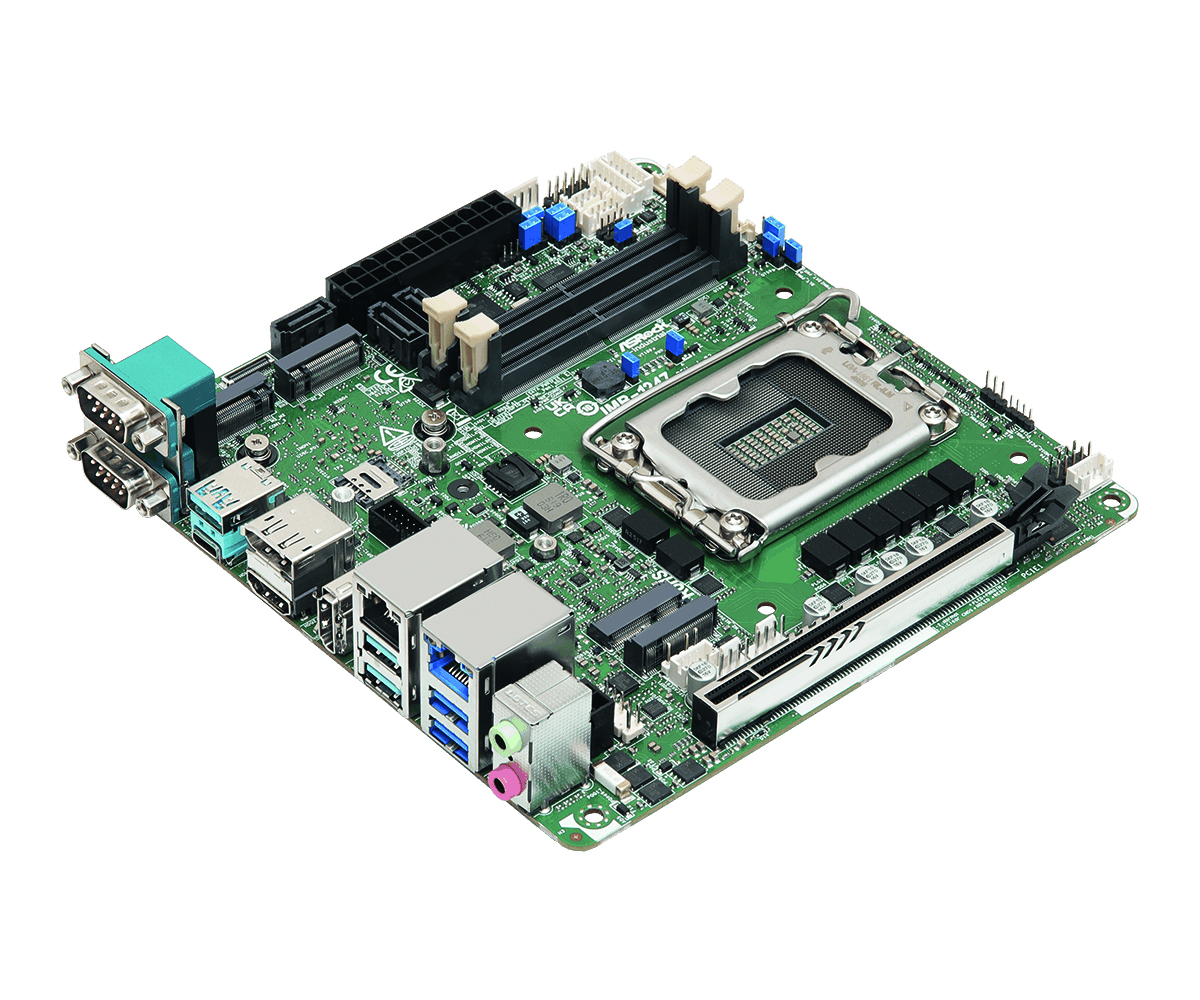

The Rise of AI Semiconductor Technologies

Realizing the vision of advanced, intelligent systems requires equally revolutionary AI semiconductor technology. The shift in computing architecture towards edge-based, on-device AI represents one of the most significant transformations in the industry. Companies like DeepX, Intel, Qualcomm, and AMD are at the forefront of this evolution, developing specialized chips designed to handle complex multi-modal AI tasks locally and efficiently. These next-generation AI semiconductors address fundamental challenges of latency, bandwidth limitations, and privacy. Running AI computation directly on devices such as smartphones, autonomous vehicles, drones, and wearables avoids the round trip to the cloud, which enables near-instant responsiveness, keeps personal data on the device, and can significantly reduce energy consumption.