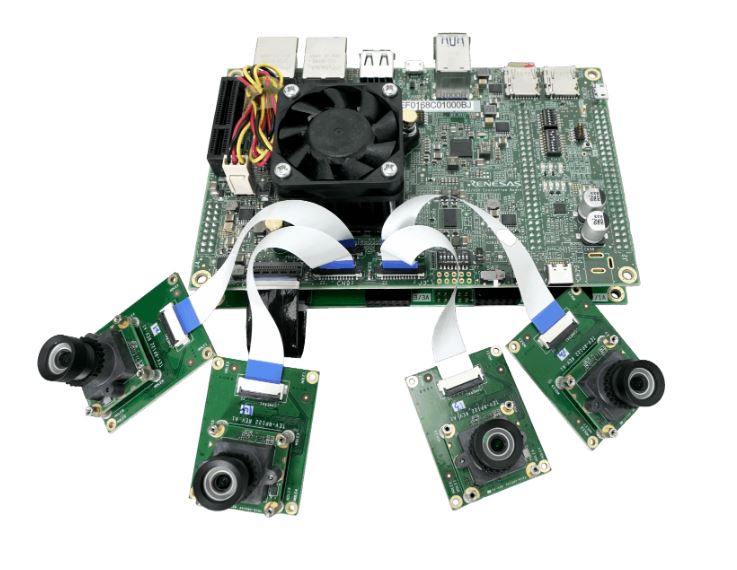

Every intelligent system starts with perception. A robot can only plan and act as well as the data it receives. But real environments are cluttered and constantly changing. Capture must be precise and repeatable. Unreliable inputs lead to conservative planning and unstable control. Macnica ATD Europe’s capture technologies provide the sensory foundation of autonomy. Through an ecosystem including industrial CMOS and global-shutter sensors, Time-of-Flight (ToF) modules, imaging processors, and strain and motion sensors, machines gain the ability to observe their surroundings, monitor their own movement, and measure internal conditions with high fidelity. These signals are often complemented by encoders, MEMS, radar, and acoustic sensing, integrated over low-latency interfaces such as SLVS-EC, MIPI CSI-2, and SPI. Together, they form a digital nervous system, feeding continuous streams of structured data into perception and control pipelines. As models move closer to the edge, capture is becoming more intelligent. Sensors increasingly pre-filter, timestamp, and contextualize data at the source.

Intelligence Emerges from Integration

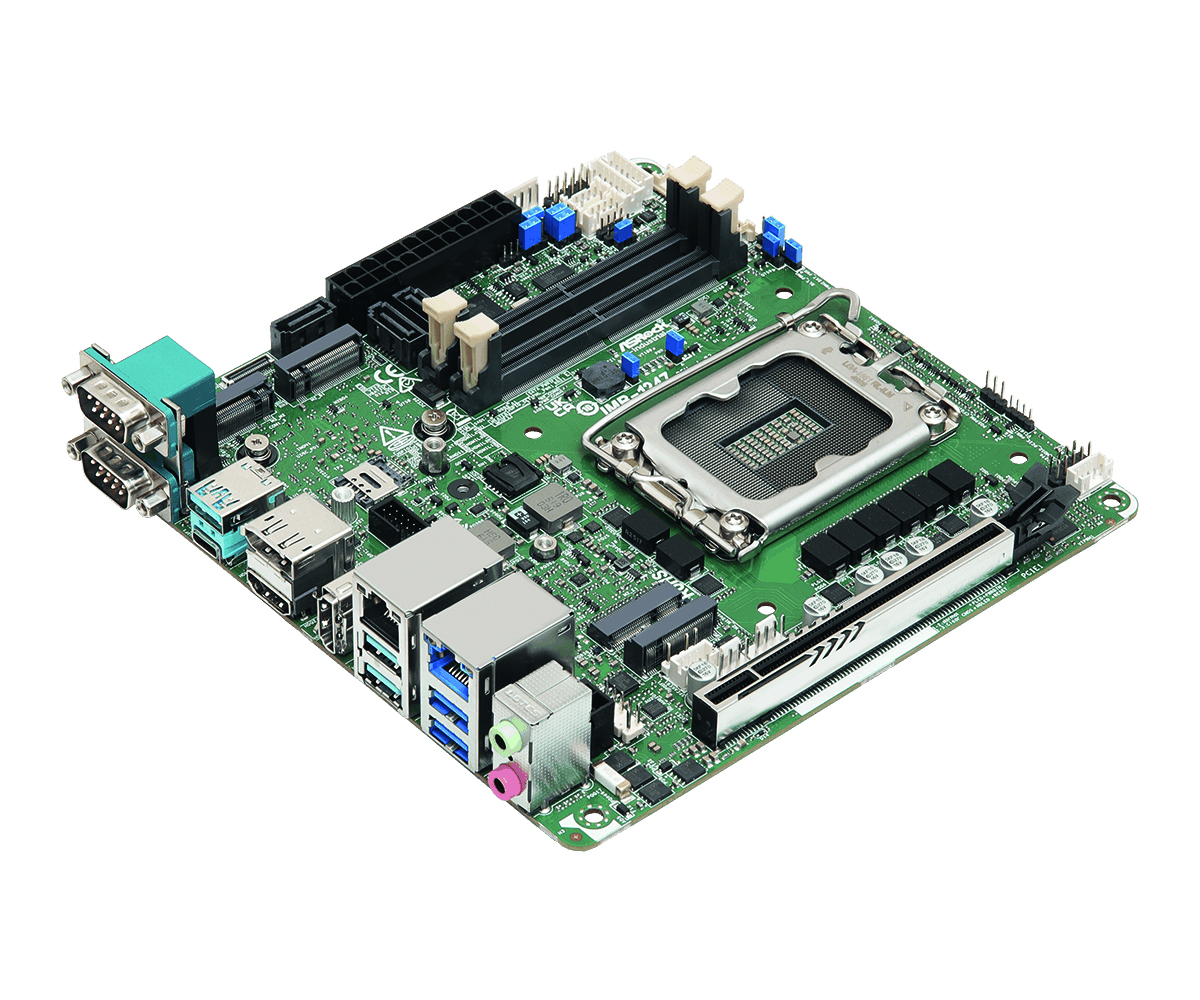

Captured data must be transformed into decisions locally and in real time. That requires processing that is fast, deterministic, and secure, on compute platforms that can host perception pipelines, sensor fusion, and AI inference. Architectures often combine FPGA and SoC acceleration with edge AI engines to handle high-bandwidth vision, synchronize multi-sensor inputs, and execute inference and planning with low latency. Openness and IP security are equally important: customers can integrate licensed IP, add proprietary algorithms, or co-develop solutions while retaining full ownership of their software and intellectual property.

Autonomy does not come from sensors or processors alone. It emerges when capture and processing are engineered together, with robust synchronization, validation, and lifecycle support. When sensing is consistent and processing is deterministic, control becomes predictable and verification becomes repeatable. This is why perception and computation must be treated as architectural decisions, not late-stage components.
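Synchronization is easiest to see at the data level. The following is a minimal sketch that pairs each camera frame with the nearest IMU sample by timestamp and rejects pairs whose skew exceeds a tolerance. It assumes both streams are stamped against one shared clock; the function names, the 5 ms tolerance, and the sample rates are illustrative assumptions, not part of any Macnica or partner API.

```python
from bisect import bisect_left
from typing import List, Sequence, Tuple


def nearest_index(timestamps: Sequence[float], t: float) -> int:
    """Index of the timestamp closest to t; `timestamps` must be sorted ascending and non-empty."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    before, after = timestamps[i - 1], timestamps[i]
    # Pick whichever neighbour is closer in time (ties go to the earlier sample).
    return i if after - t < t - before else i - 1


def pair_by_timestamp(
    frame_ts: Sequence[float], imu_ts: Sequence[float], max_skew_s: float
) -> List[Tuple[int, int]]:
    """Pair each camera frame with its nearest IMU sample; drop pairs whose skew exceeds max_skew_s."""
    if not imu_ts:
        return []
    pairs: List[Tuple[int, int]] = []
    for frame_idx, t in enumerate(frame_ts):
        imu_idx = nearest_index(imu_ts, t)
        if abs(imu_ts[imu_idx] - t) <= max_skew_s:
            pairs.append((frame_idx, imu_idx))
    return pairs


# Hypothetical example: ~30 fps frames against a 100 Hz IMU on one shared clock (seconds).
# The frame at 0.200 s has no IMU sample within 5 ms, so it is rejected.
frames = [0.000, 0.033, 0.067, 0.200]
imu = [0.00, 0.01, 0.02, 0.03, 0.04, 0.05, 0.06, 0.07]
print(pair_by_timestamp(frames, imu, max_skew_s=0.005))  # [(0, 0), (1, 3), (2, 7)]
```

Rejecting a frame rather than forcing a match keeps skew bounded, which is what makes downstream fusion repeatable; a production pipeline might interpolate between IMU samples instead of taking the nearest one.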

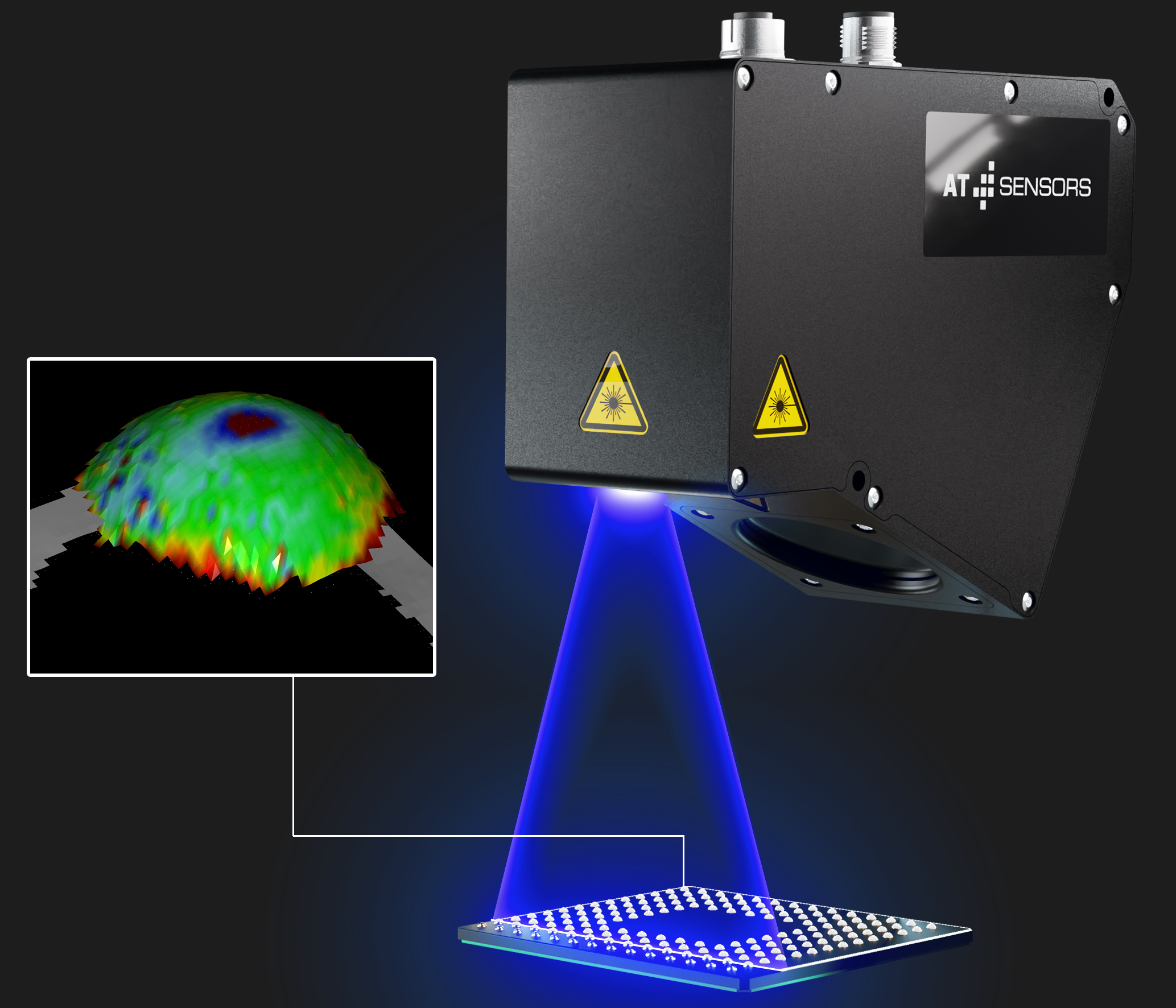

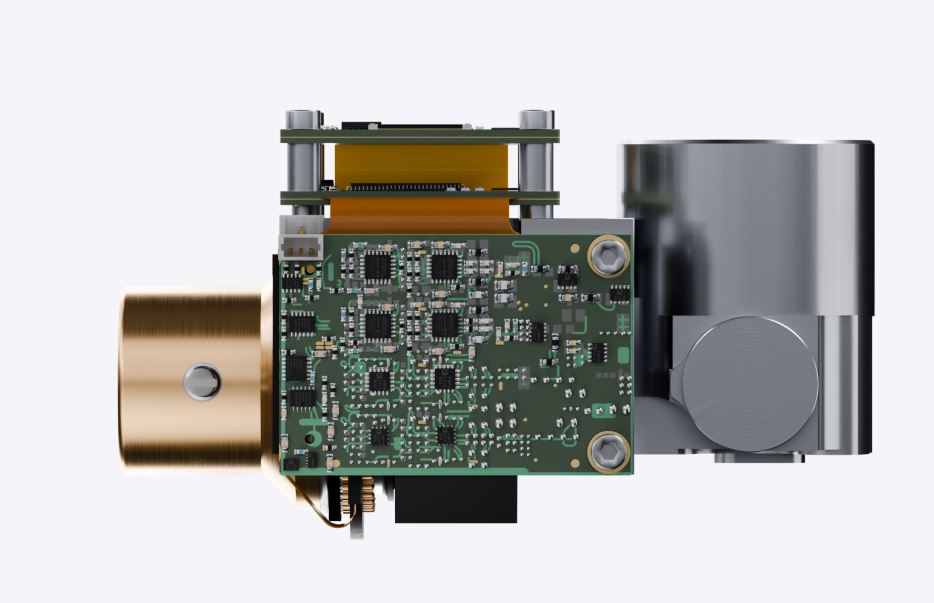

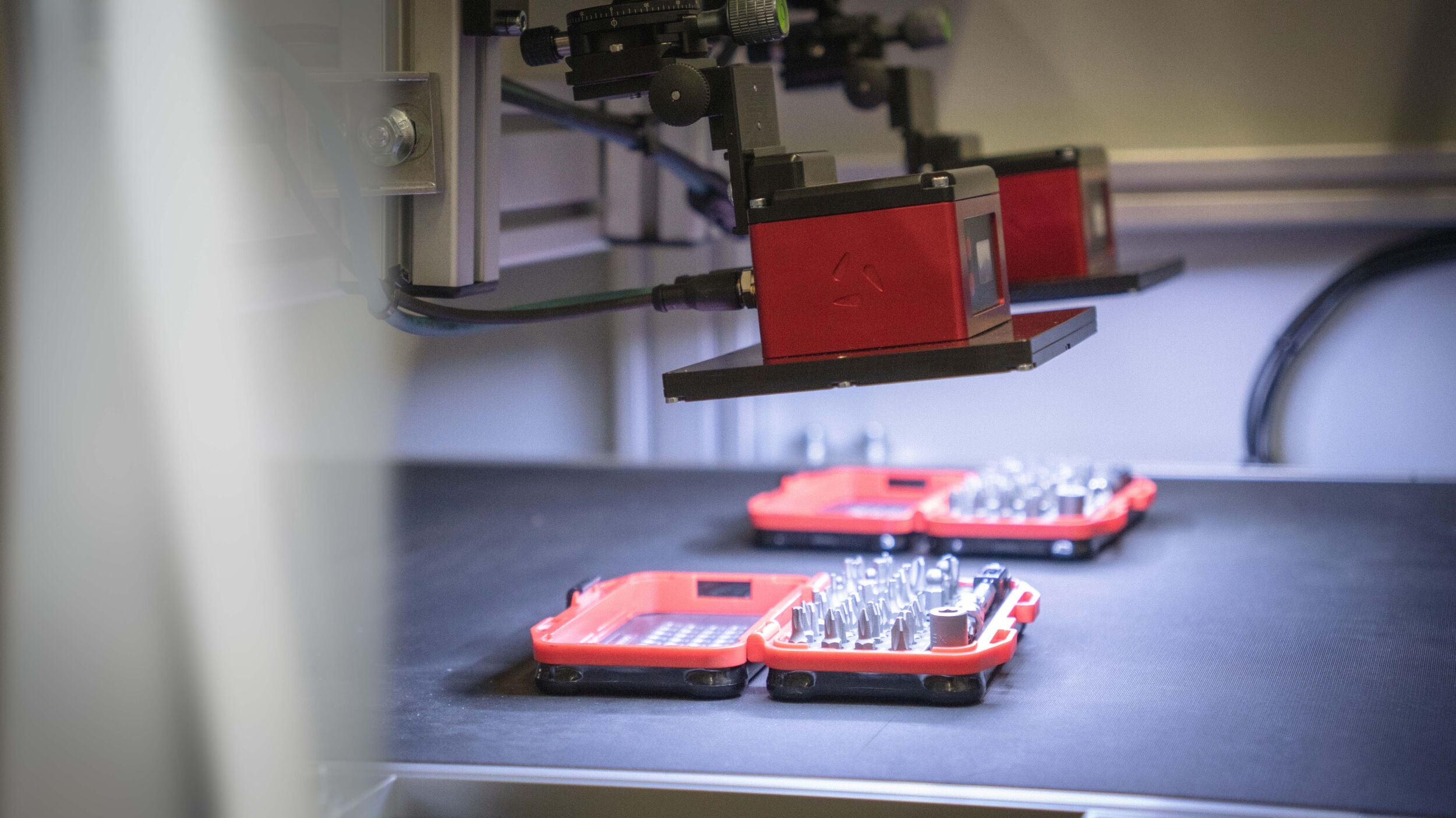

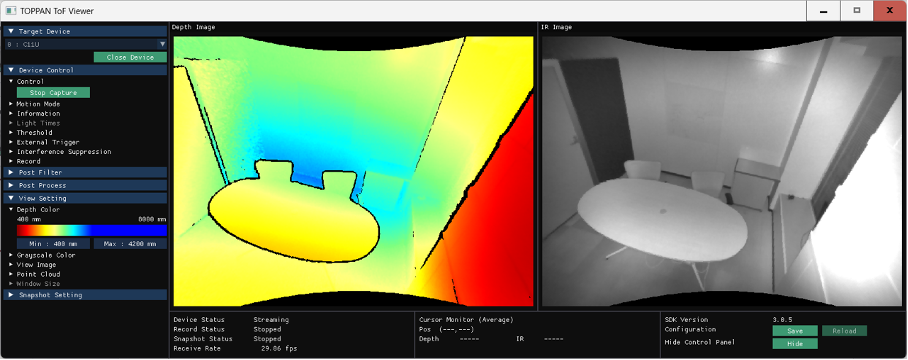

Making Depth Deterministic with ToF

Depth is a clear proof point of why integration matters. 2D vision can misread distance under harsh lighting, on reflective surfaces, during motion, and in clutter, increasing risk in tracking, planning, and collision avoidance. ToF measures distance directly by timing reflected light, producing dense depth maps fast enough for real-time control loops. Production-grade hybrid and short-pulse ToF adds robustness through ambient light suppression, high-speed 3D capture at up to 120 fps to reduce motion artifacts, and interference cancellation for multi-camera scenes. The result is consistent depth across environments, fewer edge cases, and faster validation.
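The basic relation behind timing reflected light is d = c·Δt/2: the light covers the distance twice, out and back. A few lines of arithmetic show the timescales involved. The helper names are illustrative, and the 1 m/s target speed is an assumed example rather than a figure from this article.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact, by SI definition


def distance_from_round_trip(round_trip_s: float) -> float:
    """Light travels out and back, so the target distance is half the round-trip path."""
    return SPEED_OF_LIGHT_M_PER_S * round_trip_s / 2.0


def round_trip_from_distance(distance_m: float) -> float:
    """Inverse relation: round-trip time for a target at distance_m."""
    return 2.0 * distance_m / SPEED_OF_LIGHT_M_PER_S


def displacement_per_frame(speed_m_per_s: float, fps: float) -> float:
    """How far a target moves between consecutive frames at a given frame rate."""
    return speed_m_per_s / fps


print(distance_from_round_trip(10e-9))     # 10 ns round trip -> ~1.499 m
print(round_trip_from_distance(0.001))     # 1 mm of depth -> ~6.67 ps of round-trip time
print(displacement_per_frame(1.0, 120.0))  # 1 m/s target at 120 fps -> ~8.3 mm per frame
```

The numbers show why depth sensing is demanding: resolving a single millimetre corresponds to only a few picoseconds of round-trip time, and higher frame rates shrink how far a moving target travels between depth samples.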

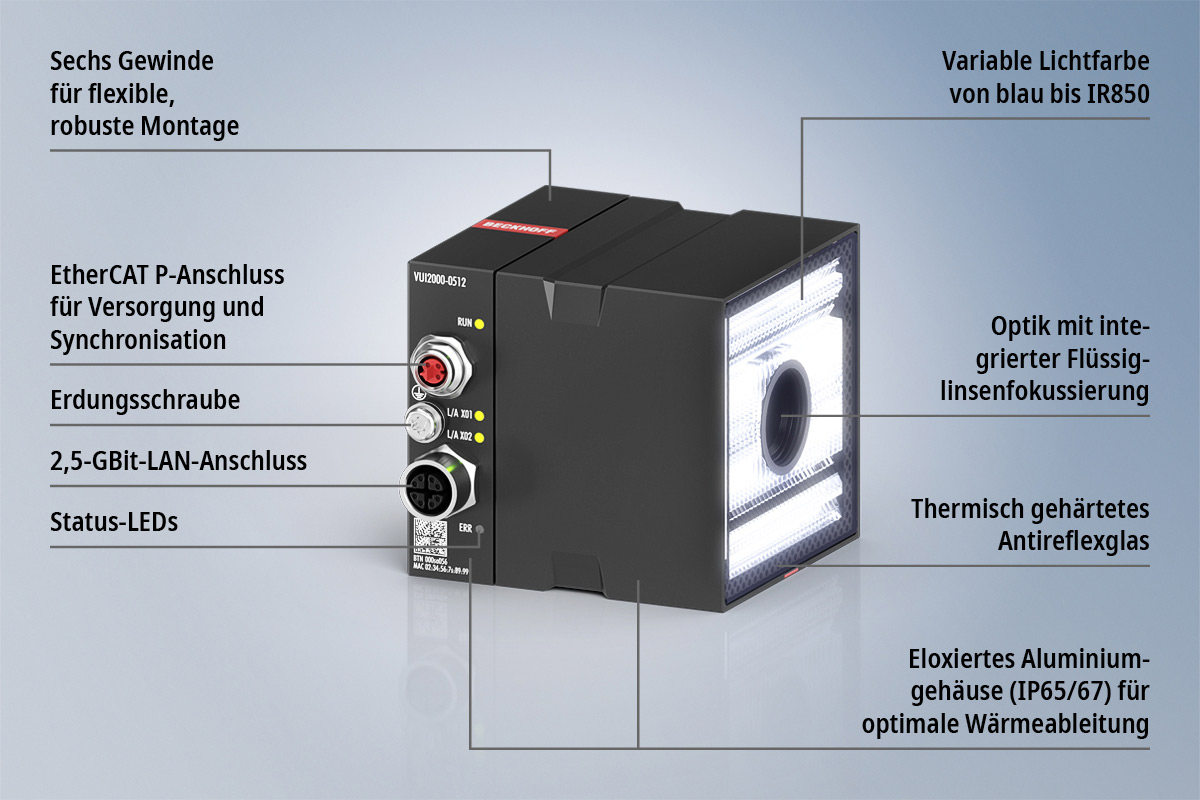

Precision Depth with Hybrid ToF

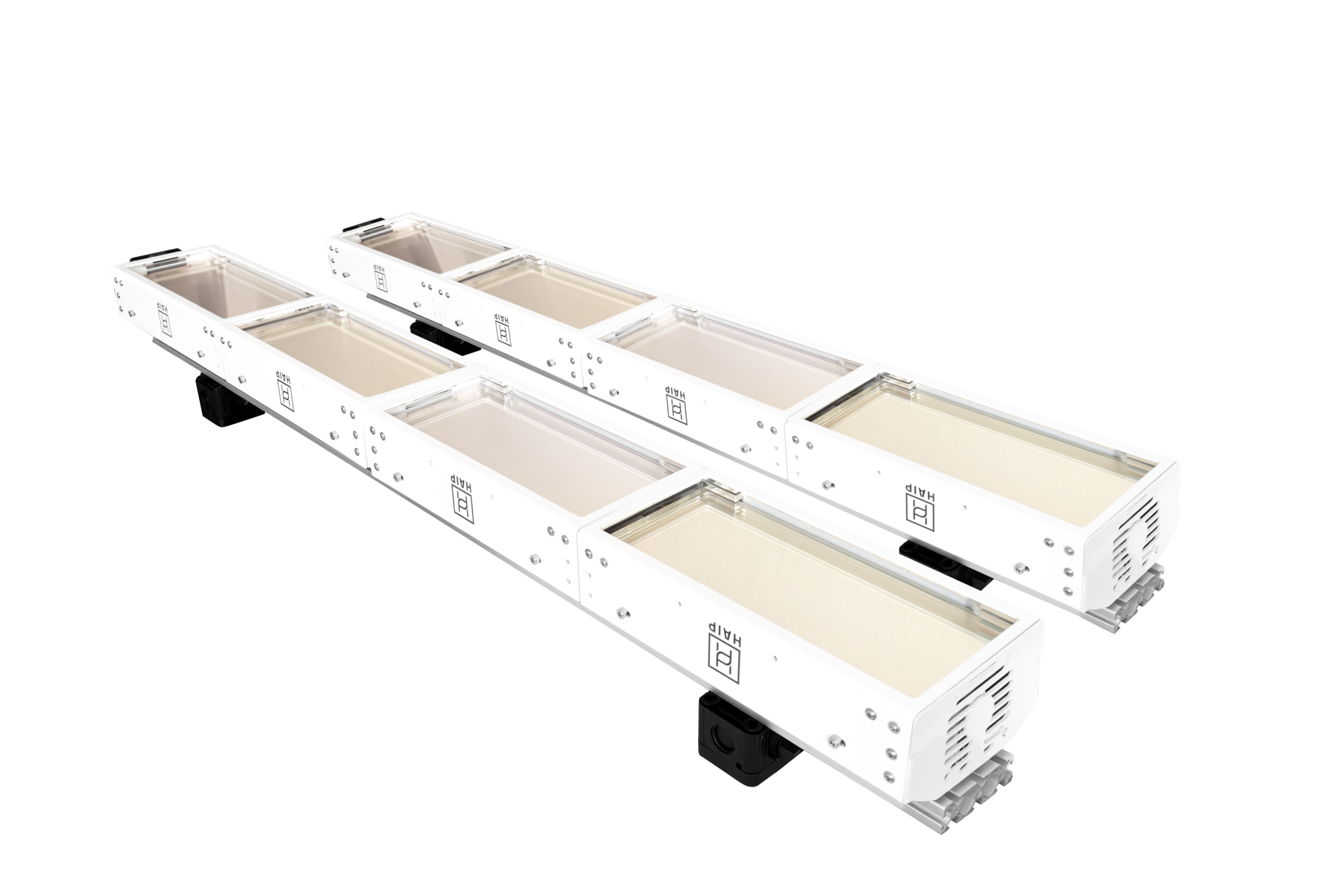

Deployable ToF requires repeatable performance and a clear path from evaluation to production. Macnica ATD Europe therefore partners with several leading technology suppliers to offer complete sensing solutions. Its partnership with Toppan Holdings across EMEA gives customers direct access to the company's 3D ToF camera modules, such as the SenSPure C11U, backed by Macnica's expertise, distribution capabilities, and technical support.

This partnership gives customers access to Toppan's semiconductor-level sensing expertise and its patented hybrid ToF technology, which enables Dynamic Ambient Light Suppression (DALS). DALS acquires both the modulated ToF signal and ambient light data within the same frame, so that ambient light noise can be cancelled. This enables accurate area sensing and object detection not only indoors near a window but also outdoors (at up to 100,000 lx).

Conventional CW-iToF methods, by contrast, require multiple samples of continuous-wave light to calculate distance, which makes them susceptible to motion artifacts (noise caused by motion). The hybrid ToF method takes a different approach. Based on short-pulse modulation, it emits a single pulse of light and captures its reflection in multiple time windows. Because distance can be calculated from a single frame, it suppresses motion artifacts and blur and enables operation at high frame rates.

In addition, the integrated Range Shift technique allows the working range to be adjusted flexibly to the user's environment and application, and also enables HDR without compromising frame rate. This offering supports robotics and smart infrastructure use cases such as spatial mapping, accurate detection, and gesture recognition, while reducing integration effort and accelerating time to deployment.
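The single-frame principle can be illustrated with a textbook short-pulse (pulsed indirect) ToF model. This is a minimal sketch under stated assumptions, not Toppan's patented hybrid ToF or DALS implementation: the three-window layout, the `PulseGateCharges` type, the function names, and modelling Range Shift as a simple gate delay are all hypothetical. Two windows split the returning pulse, a third window collects ambient light only within the same frame, and subtracting it before taking the ratio cancels the ambient contribution. A four-phase CW-iToF function is included for contrast, since it needs four correlation samples, which many sensors capture in sequence, to produce one depth value.

```python
import math
from dataclasses import dataclass
from typing import Optional

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


@dataclass
class PulseGateCharges:
    """Per-pixel charges from ONE frame (hypothetical three-window layout)."""
    q1: float         # window 1: opens at the gate delay, lasts one pulse width
    q2: float         # window 2: opens as window 1 closes, lasts one pulse width
    q_ambient: float  # window 3: same length, no returning pulse, ambient light only


def pulse_tof_distance(
    charges: PulseGateCharges, pulse_width_s: float, gate_delay_s: float = 0.0
) -> Optional[float]:
    """Short-pulse indirect ToF with per-frame ambient subtraction.

    The echo delay inside the gate pair is estimated from how the returning
    pulse splits between windows 1 and 2. gate_delay_s shifts the whole
    measurable window (an assumed stand-in for a range-shift setting).
    """
    s1 = max(charges.q1 - charges.q_ambient, 0.0)  # ambient removed from window 1
    s2 = max(charges.q2 - charges.q_ambient, 0.0)  # ambient removed from window 2
    total = s1 + s2
    if total <= 0.0:
        return None  # no usable return signal in this pixel
    echo_delay_s = gate_delay_s + pulse_width_s * s2 / total
    return SPEED_OF_LIGHT_M_PER_S * echo_delay_s / 2.0


def cw_itof_distance(q0: float, q90: float, q180: float, q270: float, mod_freq_hz: float) -> float:
    """Four-phase CW-iToF for contrast: four correlation samples per depth value.

    Assumes q_k = A + B*cos(phi - k) for k in {0, 90, 180, 270} degrees;
    sign conventions differ between sensors.
    """
    phase = math.atan2(q90 - q270, q0 - q180) % (2.0 * math.pi)
    return SPEED_OF_LIGHT_M_PER_S * phase / (4.0 * math.pi * mod_freq_hz)


# 10 ns pulse, echo arriving 4 ns into the gate pair: 60 % of the signal lands in
# window 1 and 40 % in window 2, on top of 250 units of ambient light per window.
pixel = PulseGateCharges(q1=600 + 250, q2=400 + 250, q_ambient=250)
print(pulse_tof_distance(pixel, pulse_width_s=10e-9))                      # ~0.600 m
# The same charges read with a 20 ns gate delay map to a window ~3 m farther out.
print(pulse_tof_distance(pixel, pulse_width_s=10e-9, gate_delay_s=20e-9))  # ~3.598 m
```

Without the ambient window, the same pixel would give a ratio of 650/1500 ≈ 0.43 instead of 0.40, placing the target at about 0.65 m rather than 0.60 m; this is the kind of bias that per-frame ambient cancellation is meant to remove.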