Sensor Fusion

Real-Time Embedded Data Fusion for Automated Vehicles

Highly automated and connected vehicles of the future will have safe, robust and ultra-efficient computing systems. In today’s cars, such systems take the form of Advanced Driver Assistance Systems (ADAS), which are essentially closed boxes, each with a dedicated set of sensors serving a specific purpose. A popular example is Adaptive Cruise Control (ACC), which uses a long-range radar to help maintain a safe distance from the vehicle ahead; another is Automatic Emergency Braking (AEB), which uses a LiDAR sensor to detect obstacles up to 100 m in front of the vehicle.

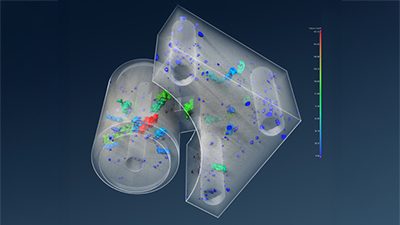

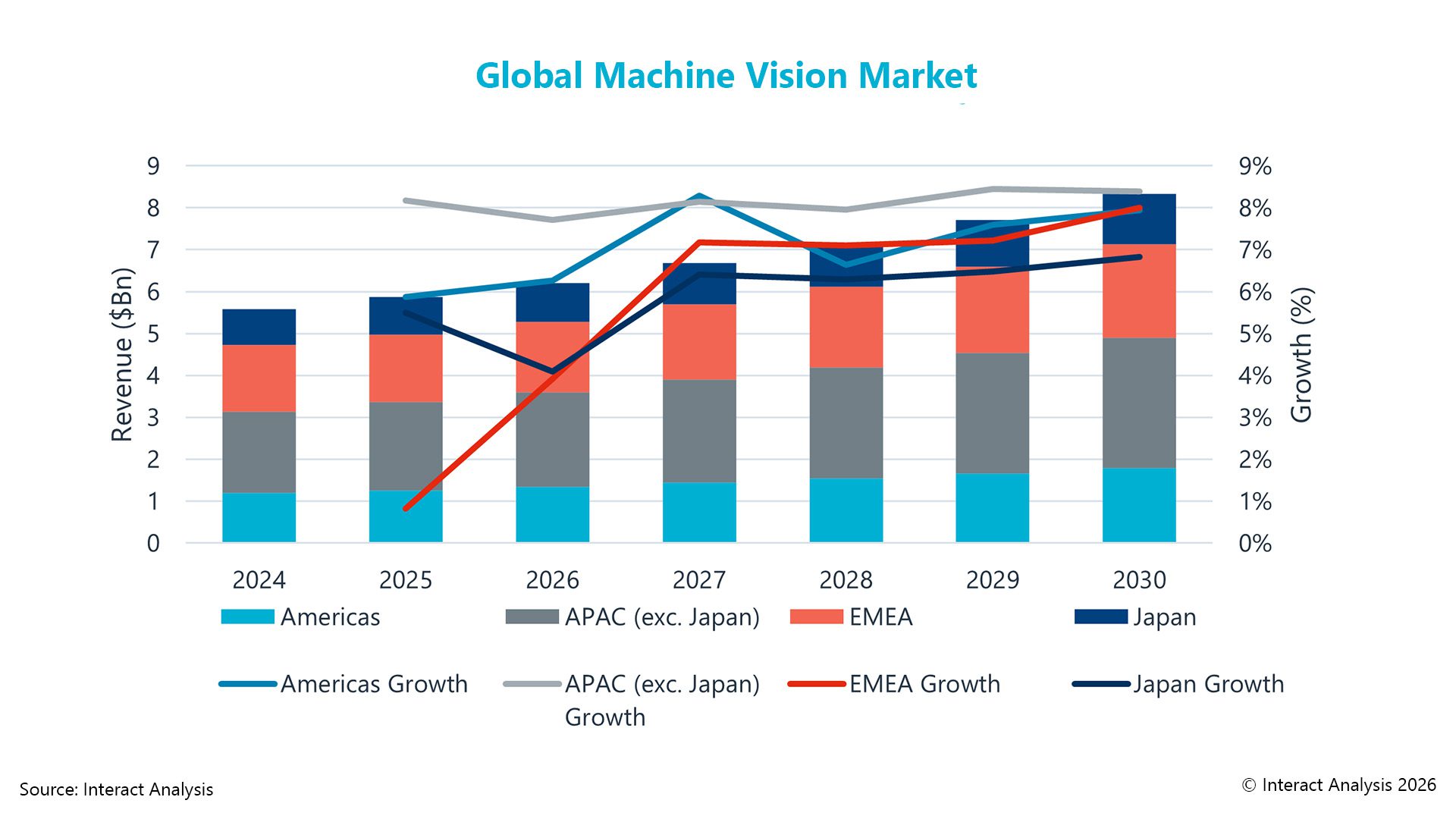

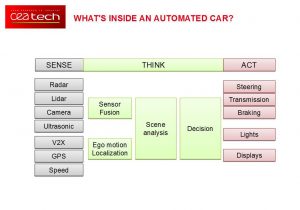

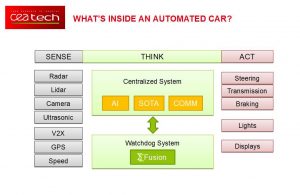

To achieve a higher level of automation, vehicle architecture is evolving from a distributed model, in which each ADAS function is implemented by its own dedicated system, to a centralized model, in which all the sensors are connected to a central system. This central system can be compared to the vehicle’s brain: it monitors the entire vehicle and its surroundings and makes the decisions needed to navigate safely through traffic in real time. It builds its perception of the environment from the vehicle’s full set of sensors through a computationally demanding process called sensor fusion. In parallel, the vehicle state and its precise location are computed from GPS data, speed sensors, and/or remote communication. Precise knowledge of both the environment and the vehicle state is crucial for a comprehensive scene analysis: Is the vehicle in the correct lane? Where are the other vehicles? Are safety distances respected? Based on this analysis, the central system decides how to operate the vehicle, including how to control its actuators.
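The article does not describe how Sigma Fusion works internally. Purely as a generic illustration of what a sensor-fusion step computes, the sketch below uses a common textbook technique, Bayesian occupancy-grid fusion in log-odds form. It assumes the sensors are conditionally independent and that every cell starts from a uniform 0.5 prior; it is not presented as Leti's algorithm, and the grids and function names are hypothetical.

```python
import math

PRIOR = 0.5   # assumed uniform prior: a cell is equally likely free or occupied
EPS = 1e-6    # clamp so probabilities of exactly 0 or 1 do not give infinite log-odds


def logit(p: float) -> float:
    """Convert a probability to log-odds, clamped away from 0 and 1."""
    p = min(max(p, EPS), 1.0 - EPS)
    return math.log(p / (1.0 - p))


def sigmoid(l: float) -> float:
    """Convert log-odds back to a probability."""
    return 1.0 / (1.0 + math.exp(-l))


def fuse_grids(sensor_grids: list[list[list[float]]]) -> list[list[float]]:
    """Fuse per-sensor occupancy grids cell by cell.

    Each grid holds P(occupied | that sensor's measurement). Under the
    independence assumption, each sensor's evidence adds in log-odds space,
    and the prior is counted exactly once.
    """
    prior_lo = logit(PRIOR)
    rows, cols = len(sensor_grids[0]), len(sensor_grids[0][0])
    fused = []
    for r in range(rows):
        fused_row = []
        for c in range(cols):
            lo = prior_lo + sum(logit(g[r][c]) - prior_lo for g in sensor_grids)
            fused_row.append(sigmoid(lo))
        fused.append(fused_row)
    return fused


# Hypothetical 2x2 grids from two sensors (P(occupied) per cell).
lidar = [[0.9, 0.5], [0.2, 0.5]]
radar = [[0.8, 0.5], [0.3, 0.7]]
fused = fuse_grids([lidar, radar])
```

When both sensors lean towards "occupied" for cell (0, 0), the fused probability rises to 36/37 (about 0.973), higher than either sensor alone, while a sensor reporting 0.5 adds no evidence, so cell (1, 1) simply keeps the radar's 0.7.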

Two major problems

Two major challenges still prevent centralized computing architectures for autonomous driving from reaching the market. First, the computing power needed to perform all the required tasks in real time is huge, and is not yet available on commercial automotive electronic platforms. Second, the safety of the overall system has not yet been assessed. Open safety questions include how to cope with unpredictable systems involving artificial intelligence (AI), software-over-the-air (SOTA) updates, remote communications, and bugs. While the entire automotive and electronics industry is focused on the first challenge, Leti, a French technology research institute founded in 1967, is working on the second. Leti developed Sigma Fusion, an embedded sensor-fusion solution that is available for integration in highly automated vehicles. It fuses all sensor data in real time on a fully integrated embedded platform that is ready for automotive certification (ISO 26262). Sigma Fusion relies on a formally proven algorithm that provides accurate and precise control of the fusion process, and it increases the efficiency of the process by a factor of 100 compared to state-of-the-art implementations. It is the first solution to offer:

- Low-cost, low-power and easy integration

- Real-time performance on existing automotive platforms

- Accurate and fast environment perception

- Robustness and safety thanks to a patented, error-free computation process

It can be used as the core component of a watchdog system that assesses the entire decision process of the central system in real time. Thanks to its efficiency and predictability, it can run on existing automotive-certified platforms, bringing trust and safety to autonomous driving systems at low cost and with minimal system overhead.
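The article does not say how such a watchdog would evaluate the central system's decisions. As a purely hypothetical illustration, a watchdog could cross-check the trajectory planned by the central system against an independently fused occupancy grid and refuse to validate it if any cell on the path is likely occupied. The threshold, the grid representation and the `path_is_safe` function are assumptions, not part of Sigma Fusion.

```python
from typing import Sequence

OCCUPIED_THRESHOLD = 0.65  # hypothetical: cells above this probability count as obstacles


def path_is_safe(grid: Sequence[Sequence[float]],
                 path: Sequence[tuple[int, int]],
                 threshold: float = OCCUPIED_THRESHOLD) -> bool:
    """Return True only if every (row, col) cell on the planned path is likely free.

    Cells outside the grid are treated as unsafe, because the fused
    perception provides no evidence about them.
    """
    rows = len(grid)
    for r, c in path:
        if not (0 <= r < rows and 0 <= c < len(grid[r])):
            return False  # no perception data for this cell: refuse to validate
        if grid[r][c] > threshold:
            return False  # likely obstacle on the planned trajectory
    return True


# Hypothetical fused grid (P(occupied) per cell) and two candidate paths.
grid = [[0.1, 0.2, 0.9],
        [0.1, 0.1, 0.3]]
clear_path = [(1, 0), (1, 1), (1, 2)]
blocked_path = [(0, 0), (0, 1), (0, 2)]
```

Here `path_is_safe(grid, clear_path)` accepts the plan, while `blocked_path` is rejected because it crosses the cell at 0.9. Treating unknown cells as unsafe is a deliberately conservative choice for a safety monitor.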

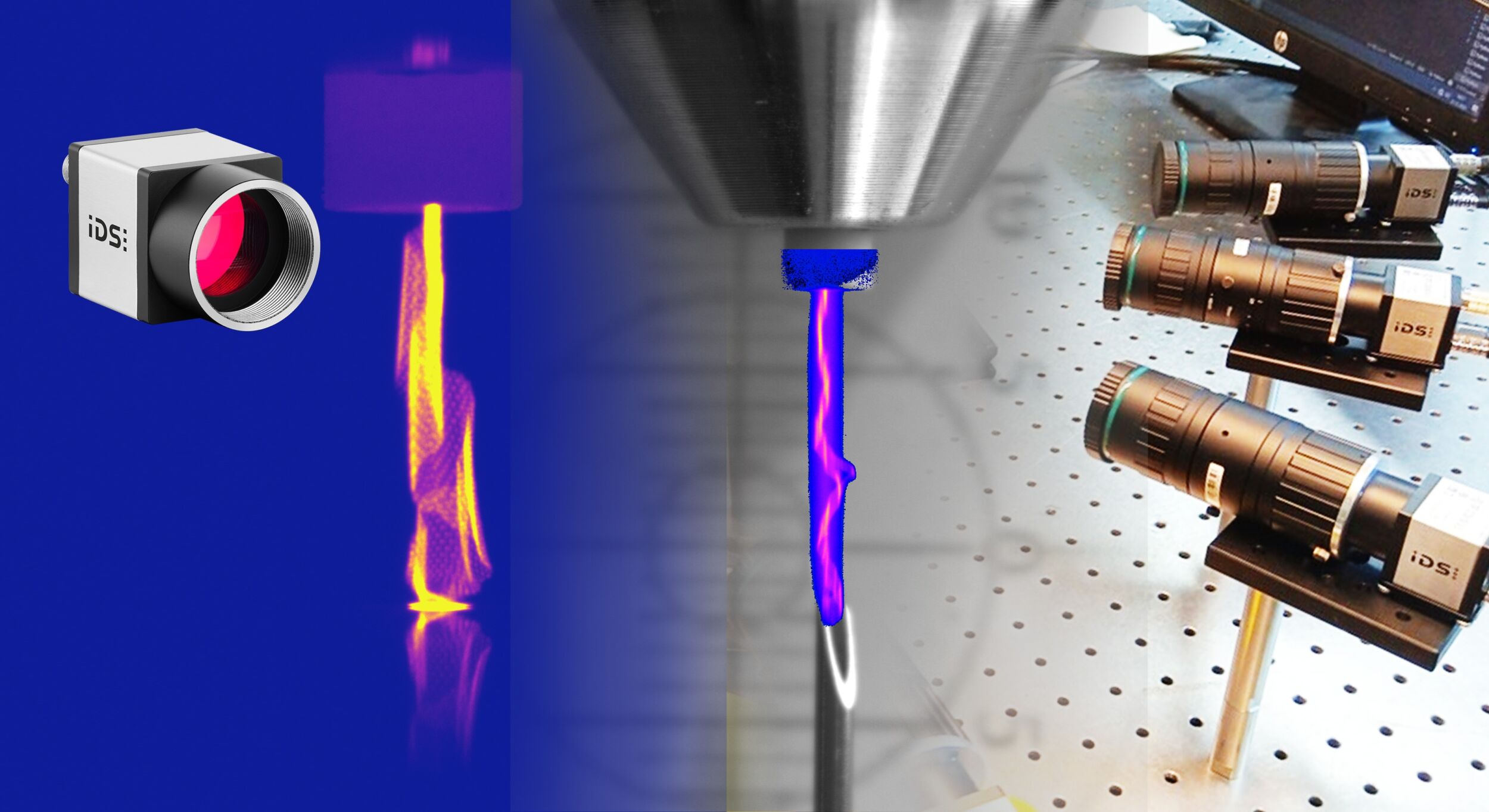

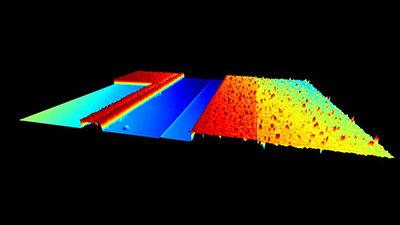

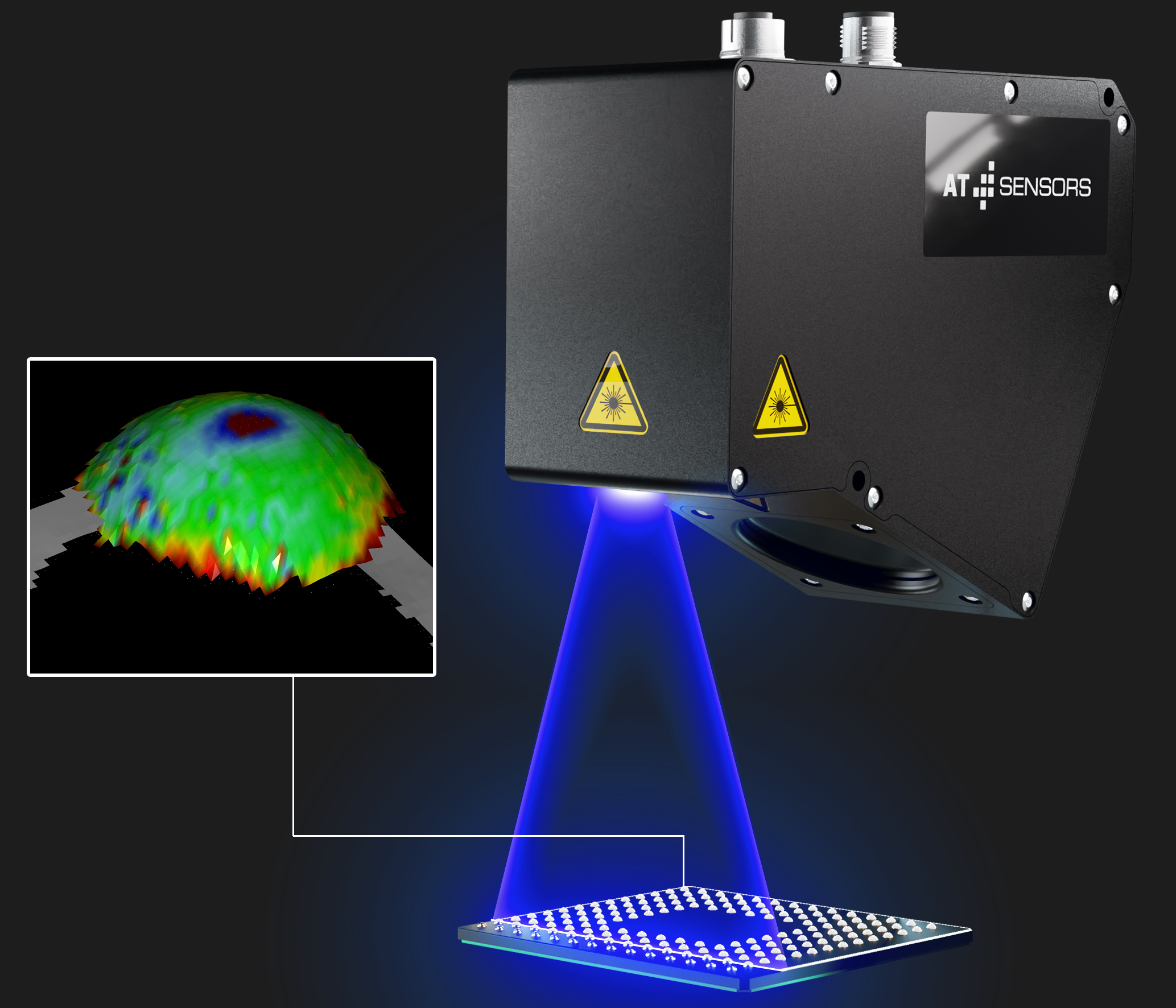

Data fusion of LiDAR sensors

In a demonstrator presented at VISION 2016, Sigma Fusion was connected to two Velodyne LiDAR sensors (VLP-16) and performed the fusion in real time on a single microcontroller. The platform is based on an ARM Cortex-M7 running at 200 MHz. The data rate on each Velodyne Ethernet link is 8 Mbit/s, which corresponds to 300,000 points/s. Sigma Fusion has been designed to handle a wide range of sensor technologies: 3D cameras, stereo vision, radar, LiDAR, etc. Mixing heterogeneous sensing technologies helps extend the vehicle’s perceptive field, eliminate blind spots, and cope with complex environmental conditions (e.g., fog, darkness, tunnels). By simultaneously addressing cost and safety constraints, Sigma Fusion helps bridge the gap between futuristic robotic-car prototypes and mass-market adoption, and it will help make autonomous driving an industrial reality.
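The demonstrator figures give a sense of how tight the real-time budget is. The back-of-the-envelope calculation below uses only the numbers quoted above (two sensors, 300,000 points/s and 8 Mbit/s per link, a 200 MHz clock) and assumes, as a simplification, that every CPU cycle is available for fusion; the `fusion_budget` helper is hypothetical.

```python
def fusion_budget(sensors: int, points_per_s: int,
                  link_bits_per_s: int, cpu_hz: int) -> dict[str, float]:
    """Rough throughput budget for a single-core fusion platform."""
    total_points_per_s = sensors * points_per_s
    return {
        "total_points_per_s": total_points_per_s,
        # Upper bound: assumes the whole CPU is devoted to fusion work.
        "cycles_per_point": cpu_hz / total_points_per_s,
        # Link bandwidth per point (point payload plus any protocol overhead).
        "bits_per_point": link_bits_per_s / points_per_s,
        "total_link_mbit_per_s": sensors * link_bits_per_s / 1e6,
    }


# Figures quoted for the VISION 2016 demonstrator.
budget = fusion_budget(sensors=2, points_per_s=300_000,
                       link_bits_per_s=8_000_000, cpu_hz=200_000_000)
for name, value in budget.items():
    print(f"{name}: {value:.1f}")
```

The result is 600,000 points/s in total, or roughly 333 clock cycles per point at best. Everything done per point, from receiving and decoding packets to updating the fused environment model, has to fit within that budget, which is why fusion efficiency matters so much on a microcontroller-class platform.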