Process Information from Vision

One topic of great interest to many companies in the industrial sector is how to use the multitude of data captured across manufacturing processes to gain greater insight, productivity, or efficiency. A conceptual outline of value creation and capture is shown in figure 1. Horizontally, the chart shows the increase in data volume moving from measuring a single point on a single part, through multiple points on a part, to multiple batches, multiple tools, and ultimately multiple facilities. As all this data is captured, the opportunity for extracting valuable insights increases much more rapidly than the data volume itself. For example, with a single measurement of a single point on a part, the only information available is whether that individual point is OK, which provides limited value. Conversely, with access to data from across the enterprise, valuable decisions can be made on whether entire process lines, factories, and enterprises are functioning as required.

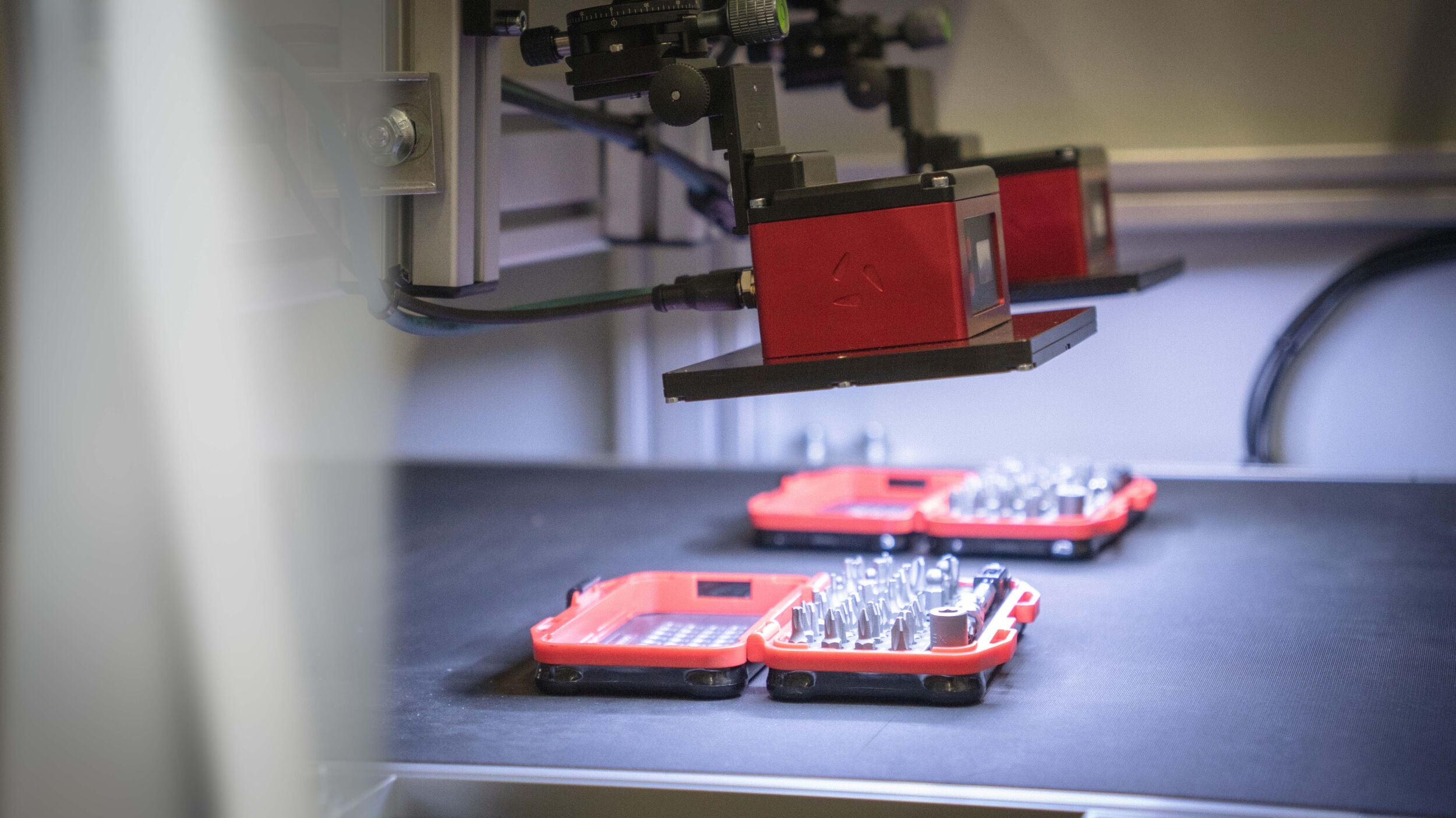
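The roll-up described above, from a single point to tools and facilities, can be illustrated with a minimal sketch. The `Measurement` record, its field names, and the `pass_rate_by` helper are hypothetical and chosen only for illustration; they do not represent any vendor's data model.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Measurement:
    # Hypothetical record: one inspected point on one part.
    facility: str
    tool: str
    batch: str
    part: str
    point: str
    in_tolerance: bool


def pass_rate_by(measurements, level):
    """Return the fraction of in-tolerance measurements per value of `level`."""
    totals = defaultdict(int)
    passes = defaultdict(int)
    for m in measurements:
        key = getattr(m, level)  # e.g. "part", "batch", "tool", "facility"
        totals[key] += 1
        passes[key] += int(m.in_tolerance)
    return {key: passes[key] / totals[key] for key in totals}


# Illustrative data only.
measurements = [
    Measurement("plant_a", "tool_1", "b1", "p1", "hole_1", True),
    Measurement("plant_a", "tool_1", "b1", "p1", "hole_2", True),
    Measurement("plant_a", "tool_2", "b2", "p2", "hole_1", False),
    Measurement("plant_b", "tool_3", "b3", "p3", "hole_1", True),
]

tool_rates = pass_rate_by(measurements, "tool")
facility_rates = pass_rate_by(measurements, "facility")
```

The same point-level records answer a different question at each level: a single record only says that one point is OK, whereas grouping by tool or facility begins to show where a process is drifting.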

An important point is that the opportunity for value capture and value creation rests on having access to data from as many points across processes as possible, because the value creation opportunity increases dramatically with scale. Machine vision solutions often act as a primary data capture device, frequently in conjunction with other sensors, but it can be difficult for vision suppliers to either own or have access to wider process data. However, several companies are looking at how they can better leverage the data to which they do have access.

Although larger companies with greater process coverage have a natural advantage, earlier-stage companies are also moving to use data from multiple locations or sources to generate greater value for their customers. For example, Eigen Innovations (www.eigen.io) in Canada is seeking to directly address the access to additional data by using data feeds from beyond its vision inspection systems and edge algorithms. By using multiple data sources, the intention is to provide a much more accurate assessment of root cause or prediction of potential issues. Similarly, Elementary Robotics (www.elementaryrobotics.com) has recently launched an ‘Analyze’ platform, which is intended to provide advanced visualization and control of processes through the capture of data from multiple stations across parts, products, batches, and tools.

AR/VR & Maintenance

Augmented & Virtual Reality applications are also highly visible in both industrial and consumer markets, with the important distinction that AR/VR output is intended for human, rather than machine, consumption. Within industry this is another area where CAD can be used as the underlying information to enable a vision inspection application. For example, companies such as Visometry (www.visometry.com), CDM Tech (www.cdmtech.de), and PTC (www.ptc.com) each provide software libraries and solutions which take an input CAD model as the ground truth and then use a video feed to identify a physical example of the object in the real world and track its position in 3D. Once an object is registered and tracked, multiple information overlays can be provided to the user, including highlighting geometric deviations, flagging missing sub-assemblies, or showing hidden parts for training and maintenance operations. These solutions are mainly intended to aid human inspection in a mobile environment, where 3D object detection relies on the motion of a camera contained in a mobile device. The detection and tracking algorithms are generally not based on neural networks and are heavily optimised to run in real time on the compute platforms available in mobile devices.
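As a rough illustration of the deviation and missing-part overlays mentioned above, the following minimal sketch compares nominal CAD feature positions with positions measured after tracking. It assumes the tracker has already aligned the measured points to the CAD coordinate frame and matched them by feature name; the function name, feature names, and tolerance are hypothetical and do not reflect the API of any of the products named above.

```python
import math


def flag_deviations(cad_points, measured_points, tolerance_mm):
    """Return (feature, deviation) pairs to highlight in an overlay.

    A deviation of None marks a feature present in the CAD model but not
    found in the measured data, e.g. a missing sub-assembly.
    """
    flagged = []
    for name, nominal in cad_points.items():
        measured = measured_points.get(name)
        if measured is None:
            flagged.append((name, None))  # feature not detected at all
            continue
        deviation = math.dist(nominal, measured)  # Euclidean distance in mm
        if deviation > tolerance_mm:
            flagged.append((name, deviation))
    return flagged


# Illustrative data only: nominal positions from CAD, in millimetres.
cad_points = {
    "bracket": (0.0, 0.0, 0.0),
    "bolt": (10.0, 0.0, 0.0),
    "clip": (0.0, 5.0, 0.0),
}
# Measured positions, assumed already registered to the CAD frame.
measured_points = {
    "bracket": (0.1, 0.0, 0.0),
    "bolt": (10.0, 0.0, 1.5),
}

overlay_items = flag_deviations(cad_points, measured_points, tolerance_mm=0.5)
```

In this example the bolt is flagged for being out of tolerance and the clip for being absent, while the bracket stays within tolerance and is not highlighted.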

Another human-centric vision application is the identification of spare parts later in a product’s operational lifetime. For example, Nyris (www.nyris.io) has developed a visual search engine to accurately identify a part in the field. The starting point is a 3D CAD model, typically provided by the customer, which the Nyris software uses as the basis for generating synthetic images. These images are then used to train a neural network for part identification. Runtime inference is deployed as a search capability through a software-as-a-service model: the user simply takes an image of a part with a mobile phone, the part is recognised by a network running in the cloud, and the correct part identification is returned to the user.

An interesting technical aspect which is important in the development of CAD-to-AI training is the topic of material transfer. Typically, a CAD model will not contain a detailed description of how a part's material or texture should look, potentially identifying only the material type. Style transfer to CAD models is under active development by many institutes and several companies, with the aim of ensuring the link between the CAD model and a physically realistic image, and it is likely that this topic will increase in visibility as the use of synthetic data becomes more widespread.